At Rewind, we generally subscribe to the “treat your servers like cattle, not pets” mantra, yet we still have the occasional need to log in to EC2 instances using SSH. We had instances locked down fairly tightly, using security groups that only allowed SSH from a VPN source IP. That worked well, but we still had the problem of shared keys (AWS Elastic Beanstalk uses a single SSH key for all instances in an environment). Surely there has to be a better way to manage access control for shell sessions?

There is! AWS Systems Manager Session Manager (let’s call it session manager for short). Session manager lets you open an interactive shell connection to an EC2 instance, with several key features:

- Instances in private subnets can be reached even without a NAT gateway. VPC endpoints for Systems Manager are all that is required.

- No inbound security group rules are required for public instances — all communication is via the Systems Manager service.

- All user sessions and commands are logged to CloudWatch Logs (or S3), with optional encryption via KMS.

- Access control is via standard IAM policies and can be configured for tag-based resource access.

- As a huge bonus, SSH can be tunnelled over a session manager session! This can be used to provide access to private RDS databases.
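For the private-subnet case in the first bullet, "VPC endpoints for Systems Manager" means interface endpoints for three services: `ssm`, `ssmmessages` and `ec2messages`. The sketch below creates them with `aws ec2 create-vpc-endpoint`; the helper names and every VPC, subnet and security group ID are placeholders, and shipping session logs to CloudWatch Logs, S3 or KMS from a subnet with no NAT gateway would need endpoints for those services as well.

```shell
#!/usr/bin/env bash
# Sketch: create the interface VPC endpoints Session Manager needs in a
# private subnet with no NAT gateway. Helper names and IDs are hypothetical.

# Print the endpoint service names for a region, one per line.
ssm_endpoint_services() {
  local region="$1"
  local svc
  for svc in ssm ssmmessages ec2messages; do
    printf 'com.amazonaws.%s.%s\n' "${region}" "${svc}"
  done
}

# Create one interface endpoint per service, with private DNS enabled so the
# SSM agent resolves the regular service hostnames to the endpoints.
create_ssm_endpoints() {
  local region="$1" vpc_id="$2" subnet_id="$3" sg_id="$4"
  local service
  for service in $(ssm_endpoint_services "${region}"); do
    aws ec2 create-vpc-endpoint \
      --region "${region}" \
      --vpc-id "${vpc_id}" \
      --vpc-endpoint-type Interface \
      --service-name "${service}" \
      --subnet-ids "${subnet_id}" \
      --security-group-ids "${sg_id}" \
      --private-dns-enabled
  done
}

# Usage (placeholder IDs):
#   create_ssm_endpoints us-east-1 vpc-0abc123 subnet-0abc123 sg-0abc123
```

The security group attached to the endpoints has to allow inbound HTTPS (port 443) from the instances; the instances themselves still need no inbound rules.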

There are many great articles on how to set up and configure session manager (not the least of which is the official AWS blog post which introduced the feature). Rather than rehash setup, I’ll cover a few items which we needed to customize. Namely:

- Session manager logging

- How we’ve set up session manager access control with IAM

- Some custom tooling we wrote around session manager

- A custom Elastic Beanstalk extension to manage keys for SSH tunnels over session manager sessions

Session Manager Access Control

Session manager access control is configured using standard IAM policies. We tag everything in AWS along several dimensions, so tags are an easy way to grant access. We have several policies in place depending on the access a specific user needs. Here is one example that allows a user to establish a session with any instance tagged with platform=acme:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "SessionManagerStartSession",
      "Effect": "Allow",
      "Action": "ssm:StartSession",
      "Resource": [
        "arn:aws:ec2:*:*:instance/*",
        "arn:aws:ssm:*::document/AWS-StartPortForwardingSession"
      ],
      "Condition": {
        "StringLike": {
          "ssm:resourceTag/platform": "acme"
        }
      }
    },
    {
      "Sid": "SessionManagerPortForward",
      "Effect": "Allow",
      "Action": "ssm:StartSession",
      "Resource": "arn:aws:ssm:*::document/AWS-StartPortForwardingSession"
    },
    {
      "Sid": "SessionManagerTerminateSession",
      "Effect": "Allow",
      "Action": [
        "ssm:TerminateSession",
        "ssm:ResumeSession"
      ],
      "Resource": "arn:aws:ssm:*:*:session/${aws:username}-*"
    }
  ]
}

The first block allows users to start a shell session or a port forwarding session (tunnel) on any EC2 instance tagged platform=acme.

The second block allows users to start a session with the AWS-StartPortForwardingSession document, which SSH tunnelling over a session requires. Starting a session is authorized against both the document and the target instance, so this block works in conjunction with the first: users can still only establish sessions with instances tagged platform=acme.

The third block allows users to terminate their own sessions. Session IDs are made up of the IAM user name and a unique token, so we can use the “special” IAM policy variable ${aws:username} to match the current user.

One gotcha in the third block is the need for the ssm:ResumeSession action. Without it, users terminating an SSH tunnel over session manager will see their AWS CLI hang.
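From the user's side, the third block means a session can only be terminated if its ID starts with the caller's user name. The sketch below mirrors that `session/${aws:username}-*` pattern locally before calling `aws ssm terminate-session --session-id`; `is_own_session` and `terminate_own_session` are hypothetical helpers, and IAM still makes the real authorization decision.

```shell
#!/usr/bin/env bash
# Hypothetical helpers that mirror the IAM resource pattern
# session/${aws:username}-* on the client side. IAM still decides;
# this only avoids making calls that would be denied.

# Succeed if session_id starts with "<user>-".
is_own_session() {
  local user="$1" session_id="$2"
  case "${session_id}" in
    "${user}-"*) return 0 ;;
  esac
  return 1
}

# Terminate a session only if it appears to belong to the given user.
terminate_own_session() {
  local user="$1" session_id="$2"
  if ! is_own_session "${user}" "${session_id}"; then
    echo "Refusing: ${session_id} does not belong to ${user}" >&2
    return 1
  fi
  aws ssm terminate-session --session-id "${session_id}"
}

# Usage (placeholder session ID):
#   terminate_own_session dave dave-0a1b2c3d4e5f6a7b8
```

Because this is a prefix match, a user named `dave` also matches sessions started by a user named `dave-smith`. The IAM pattern behaves the same way, so it is worth watching for user names that are prefixes of one another.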

Custom Tooling

Establishing a session manager shell is straightforward using the AWS CLI:

aws ssm start-session --target "i-01234567abcdefg"

For an SSH tunnel, the syntax is:

aws ssm start-session --target "i-01234567abcdefg" \
    --document-name AWS-StartPortForwardingSession \
    --parameters '{"portNumber":["22"],"localPortNumber":["9999"]}'
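With the tunnel open, SSH connects to the forwarded local port rather than to the instance directly. The sketch below wraps the command above; `port_forward_params` and `open_ssh_tunnel` are hypothetical helpers, and the key path and database hostname in the usage comments are placeholders. The second `ssh` form shows how the same tunnel can reach a private RDS database with ordinary SSH local forwarding.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the AWS-StartPortForwardingSession command.

# Build the --parameters JSON for the port forwarding document.
port_forward_params() {
  local remote_port="$1" local_port="$2"
  printf '{"portNumber":["%s"],"localPortNumber":["%s"]}' \
    "${remote_port}" "${local_port}"
}

# Forward local_port (default 9999) to port 22 on the instance.
# This blocks until the session ends, so run it in its own terminal.
open_ssh_tunnel() {
  local instance_id="$1" local_port="${2:-9999}"
  aws ssm start-session --target "${instance_id}" \
    --document-name AWS-StartPortForwardingSession \
    --parameters "$(port_forward_params 22 "${local_port}")"
}

# Usage (placeholder instance ID, key path and database host):
#   open_ssh_tunnel i-01234567abcdefg 9999
#   # in a second terminal, SSH to the forwarded local port:
#   ssh -i ~/.ssh/id_rsa -p 9999 ec2-user@localhost
#   # or forward a private RDS endpoint through the instance:
#   ssh -i ~/.ssh/id_rsa -p 9999 -N -L 5432:mydb.example.internal:5432 ec2-user@localhost
```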

At Rewind, we use Elastic Beanstalk for most of our services, so we are big fans of the EB CLI. Switching back to instance IDs was a little less friendly, so we combined a couple of small tools to make session manager easier to use. One is open source; the other lives in a private repo because it has some Rewind-specific parts.

aws-connect

aws-connect is a small wrapper around the AWS CLI that lets users specify connection endpoints by instance Name tag rather than instance ID. If I have a series of EC2 instances with a Name tag of web-server-tier, I can execute:

aws-connect -n web-server-tier

aws-connect then establishes a session manager shell connection to the first instance found with Name=web-server-tier. I can add the -a tunnel flag to create an SSH tunnel instead or, if I want a specific instance, the -s flag to choose from a list of instances with matching tags.
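aws-connect's own source is not reproduced here. As a rough illustration of the Name-tag lookup it performs, the hypothetical sketch below resolves the tag to an instance ID with `aws ec2 describe-instances` and hands that ID to `aws ssm start-session`. Restricting the lookup to running instances is an assumption of this sketch, not something the tool is documented to do.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of a Name-tag lookup like aws-connect's default mode.
# Not aws-connect's actual source; the running-state filter is an assumption.

# Print the first running instance ID whose Name tag matches, or fail.
resolve_instance_id() {
  local name_tag="$1"
  local ids first
  ids=$(aws ec2 describe-instances \
    --filters "Name=tag:Name,Values=${name_tag}" \
              "Name=instance-state-name,Values=running" \
    --query 'Reservations[].Instances[].InstanceId' \
    --output text)
  # Text output separates IDs with whitespace; keep only the first one.
  first=$(printf '%s\n' "${ids}" | awk '{print $1; exit}')
  if [ -z "${first}" ] || [ "${first}" = "None" ]; then
    echo "No running instance found with Name=${name_tag}" >&2
    return 1
  fi
  printf '%s\n' "${first}"
}

# Open a session manager shell on the resolved instance.
connect_by_name() {
  local instance_id
  instance_id=$(resolve_instance_id "$1") || return 1
  aws ssm start-session --target "${instance_id}"
}

# Usage: connect_by_name web-server-tier
```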

We also have another small wrapper around aws-connect called rewind-connect, which understands Rewind infrastructure and prompts the user in the platform-specific terms they are familiar with. This gives users another way to zero in on a specific instance ID before rewind-connect itself calls aws-connect. We distribute it internally through a private Homebrew tap.

Synchronizing SSH keys for Elastic Beanstalk

One of the benefits of session manager is no longer having to manage SSH keys. That said, SSH tunnels do require keys, and we’d rather not use the shared key provided by Elastic Beanstalk. How do we deal with this? Sam Maleyek posted a fantastic solution on StackOverflow. I made some tweaks to it and converted it into a full Elastic Beanstalk extension. Let’s look at the extension and what it does (this would be saved to $project_root/.ebextensions/sync_ssh_keys.config or something similar):

files:
  "/home/ec2-user/get-ssh-keys.sh":
    mode: "000755"
    content: |
      #!/bin/bash
      # Only users in this group will have their keys sync'ed to instances
      iam_group_allowed_users=beanstalk-ssh-users
      auth_file=/home/ec2-user/.ssh/authorized_keys
      tempfile=/home/ec2-user/.ssh/authorized_keys.tmp.$$

      logMsg()
      {
        INITPID=$$
        PROG="get-ssh-keys"
        logger -t "${PROG}[${INITPID}]" "$1"
        echo "$1"
      }

      # Delay the start so we don't get a storm of instances crushing IAM
      sleep_duration=$(( (RANDOM % 10) + 1 ))
      logMsg "Delaying startup by ${sleep_duration} seconds"
      sleep ${sleep_duration}s

      # Save off the "root" beanstalk SSH key if we have not already done so
      if [ ! -e "/home/ec2-user/.ssh/root_key" ]; then
        logMsg "Saving beanstalk root key to /home/ec2-user/.ssh/root_key"
        head -1 "/home/ec2-user/.ssh/authorized_keys" > "/home/ec2-user/.ssh/root_key"
      fi

      # Set the perms now or SSH will fail
      touch "${tempfile}"
      chmod 600 "${tempfile}"
      chown ec2-user:ec2-user "${tempfile}"

      # Always start off with the "root" key
      logMsg "Initializing ${tempfile} with beanstalk root SSH key"
      cat "/home/ec2-user/.ssh/root_key" > "${tempfile}"

      allowed_users=$(aws iam get-group \
        --group-name "${iam_group_allowed_users}" \
        --query 'Users[].UserName' \
        --output text)

      for iam_user in ${allowed_users}
      do
        logMsg "Retrieving SSH keys for user ${iam_user}"
        ssh_key_ids=$(aws iam list-ssh-public-keys \
          --user-name "${iam_user}" \
          --query 'SSHPublicKeys[?Status==`Active`].SSHPublicKeyId' \
          --output text)

        if [ -n "${ssh_key_ids}" ]; then
          for ssh_key_id in ${ssh_key_ids}
          do
            logMsg "Retrieving public key for ${iam_user} with ID ${ssh_key_id}"
            ssh_key=$(aws iam get-ssh-public-key \
              --encoding SSH --user-name "${iam_user}" \
              --ssh-public-key-id "${ssh_key_id}" \
              --query 'SSHPublicKey.SSHPublicKeyBody' \
              --output text)
            echo "${ssh_key}" >> "${tempfile}"
          done
        else
          logMsg "No active SSH keys found for user ${iam_user}"
        fi
      done

      # Drop in the new authorized_keys file
      logMsg "Replacing ${auth_file} with ${tempfile}"
      mv "${tempfile}" "${auth_file}"

  "/tmp/get-ssh-keys":
    mode: "000644"
    owner: root
    group: root
    content: |
      */10 * * * * root /home/ec2-user/get-ssh-keys.sh > /dev/null 2>&1

container_commands:
  01update_cron:
    command: "mv -f /tmp/get-ssh-keys /etc/cron.d"
  02postinit_hook:
    command: "cp -f /home/ec2-user/get-ssh-keys.sh /opt/elasticbeanstalk/hooks/postinit/12_get-ssh-keys.sh"

Let’s have a look at the interesting parts…

iam_group_allowed_users=beanstalk-ssh-users

We will only synchronize SSH keys for users who are members of this IAM group.
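Because group membership is all the script reads, granting or revoking tunnel access is just an IAM group change. With the `*/10` cron schedule in the extension, a removed user's keys should disappear from each instance within roughly ten minutes plus the startup jitter. A hypothetical pair of helpers, using the standard `aws iam add-user-to-group` and `aws iam remove-user-from-group` calls:

```shell
#!/usr/bin/env bash
# Hypothetical helpers for managing whose keys get synced to instances.
SSH_GROUP="beanstalk-ssh-users"   # must match iam_group_allowed_users

grant_ssh_tunnel_access() {
  aws iam add-user-to-group --group-name "${SSH_GROUP}" --user-name "$1"
}

# The user's keys are dropped on each instance's next sync run.
revoke_ssh_tunnel_access() {
  aws iam remove-user-from-group --group-name "${SSH_GROUP}" --user-name "$1"
}

# Usage: grant_ssh_tunnel_access alice
```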

head -1 "/home/ec2-user/.ssh/authorized_keys" > "/home/ec2-user/.ssh/root_key"

Always save the root Elastic Beanstalk SSH key to fall back on, should we somehow lock out all users.

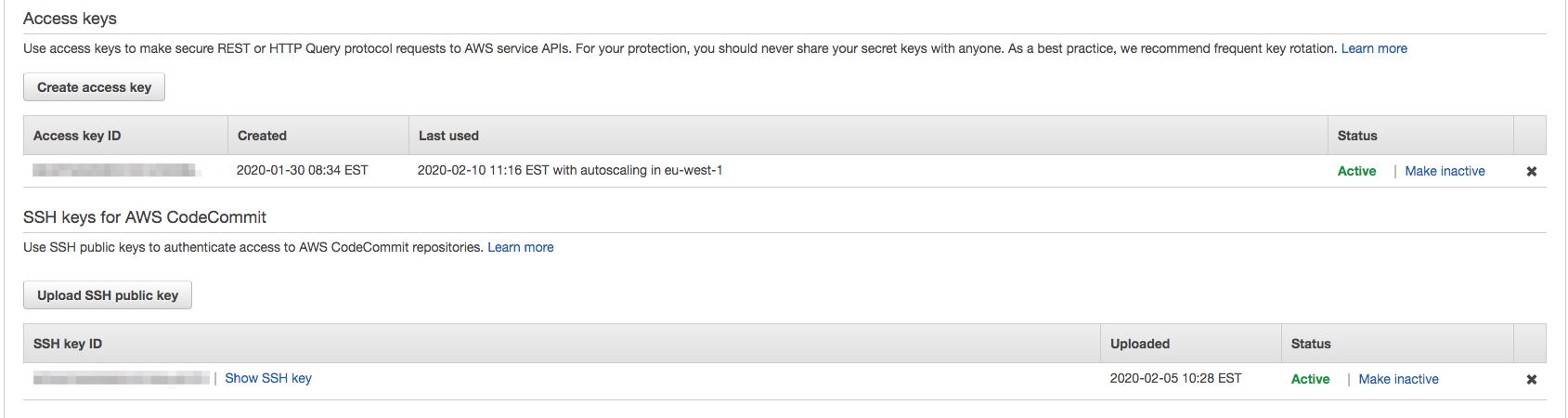

ssh_key_ids=$(aws iam list-ssh-public-keys \
  --user-name "${iam_user}" \
  --query 'SSHPublicKeys[?Status==`Active`].SSHPublicKeyId' \
  --output text)

This is the crux of the solution. Here, we get all active public SSH key IDs for a specific user.

IAM lets you upload SSH public keys to an IAM user for use with CodeCommit. But nothing says we can only use them with CodeCommit! Users can manage these keys themselves and rotate them just like IAM access keys. Perfect!
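Rotation from the user's side can be sketched with two standard CLI calls: upload the new key with `aws iam upload-ssh-public-key`, then mark the old one `Inactive` with `aws iam update-ssh-public-key`. Since the sync script only queries `Active` keys, the old key drops off instances on the next run. `rotate_ssh_key` is a hypothetical helper; it assumes the caller's IAM policy lets them manage SSH keys on their own user, and IAM accepts public keys in ssh-rsa (or PEM) format of at least 2048 bits.

```shell
#!/usr/bin/env bash
# Hypothetical key rotation: upload the new key, then deactivate the old one.
# Assumes the caller may manage SSH public keys on their own IAM user.
rotate_ssh_key() {
  local user="$1" new_pubkey_file="$2" old_key_id="$3"
  aws iam upload-ssh-public-key \
    --user-name "${user}" \
    --ssh-public-key-body "file://${new_pubkey_file}" || return 1
  # Inactive keys are skipped by the SSHPublicKeys[?Status==`Active`] query.
  aws iam update-ssh-public-key \
    --user-name "${user}" \
    --ssh-public-key-id "${old_key_id}" \
    --status Inactive
}

# Usage (placeholder key ID): rotate_ssh_key dave ~/.ssh/id_rsa.pub APKAEXAMPLEOLDKEY
```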

ssh_key=$(aws iam get-ssh-public-key \
  --encoding SSH --user-name "${iam_user}" \
  --ssh-public-key-id "${ssh_key_id}" \
  --query 'SSHPublicKey.SSHPublicKeyBody' \
  --output text)

Next, we fetch the actual public key body and append it to the temporary file that then replaces authorized_keys on the instance.
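For the script to work, the instance profile role needs permission for the three IAM calls it makes: `get-group`, `list-ssh-public-keys` and `get-ssh-public-key`. A sketch of an inline policy attached with `aws iam put-role-policy`, assuming the default Beanstalk instance role name `aws-elasticbeanstalk-ec2-role`, a hypothetical policy name `get-ssh-keys`, and a group with the default `/` path (a group path would appear in its ARN).

```shell
#!/usr/bin/env bash
# Sketch: inline policy letting Beanstalk instances run get-ssh-keys.sh.
# Role and policy names are assumptions; adjust to your environment.

# Print the policy document for an account ID and group name.
key_sync_policy() {
  local account_id="$1" group="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "iam:GetGroup",
      "Resource": "arn:aws:iam::${account_id}:group/${group}"
    },
    {
      "Effect": "Allow",
      "Action": ["iam:ListSSHPublicKeys", "iam:GetSSHPublicKey"],
      "Resource": "arn:aws:iam::${account_id}:user/*"
    }
  ]
}
EOF
}

# Attach the policy to the instance role (default name assumed).
attach_key_sync_policy() {
  local account_id="$1"
  local role="${2:-aws-elasticbeanstalk-ec2-role}"
  aws iam put-role-policy \
    --role-name "${role}" \
    --policy-name get-ssh-keys \
    --policy-document "$(key_sync_policy "${account_id}" beanstalk-ssh-users)"
}

# Usage (placeholder account ID): attach_key_sync_policy 123456789012
```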

container_commands:
  01update_cron:
    command: "mv -f /tmp/get-ssh-keys /etc/cron.d"
  02postinit_hook:
    command: "cp -f /home/ec2-user/get-ssh-keys.sh /opt/elasticbeanstalk/hooks/postinit/12_get-ssh-keys.sh"

Finally, a cron job on the instance synchronizes the keys every 10 minutes. We also copy the script into a Beanstalk postinit hook so the keys are synchronized right away at boot time, rather than after waiting for the first cron run.

Wrapping up

In 25 years of working in tech, two security principles have really stuck with me:

- Defence in Depth: The more layers you can implement to secure your stuff, the better. Session manager has allowed us to remove direct SSH access and shared keys. Combined with full session logging and IAM, we have a much more secure access model for the times when we need shell access to an instance.

- Security is not convenient: A security officer told me this over 20 years ago. I’ve generally found it to hold true over the course of my career; AWS session manager, however, is one of the rare exceptions. Session manager is both secure and convenient!

Dave North
